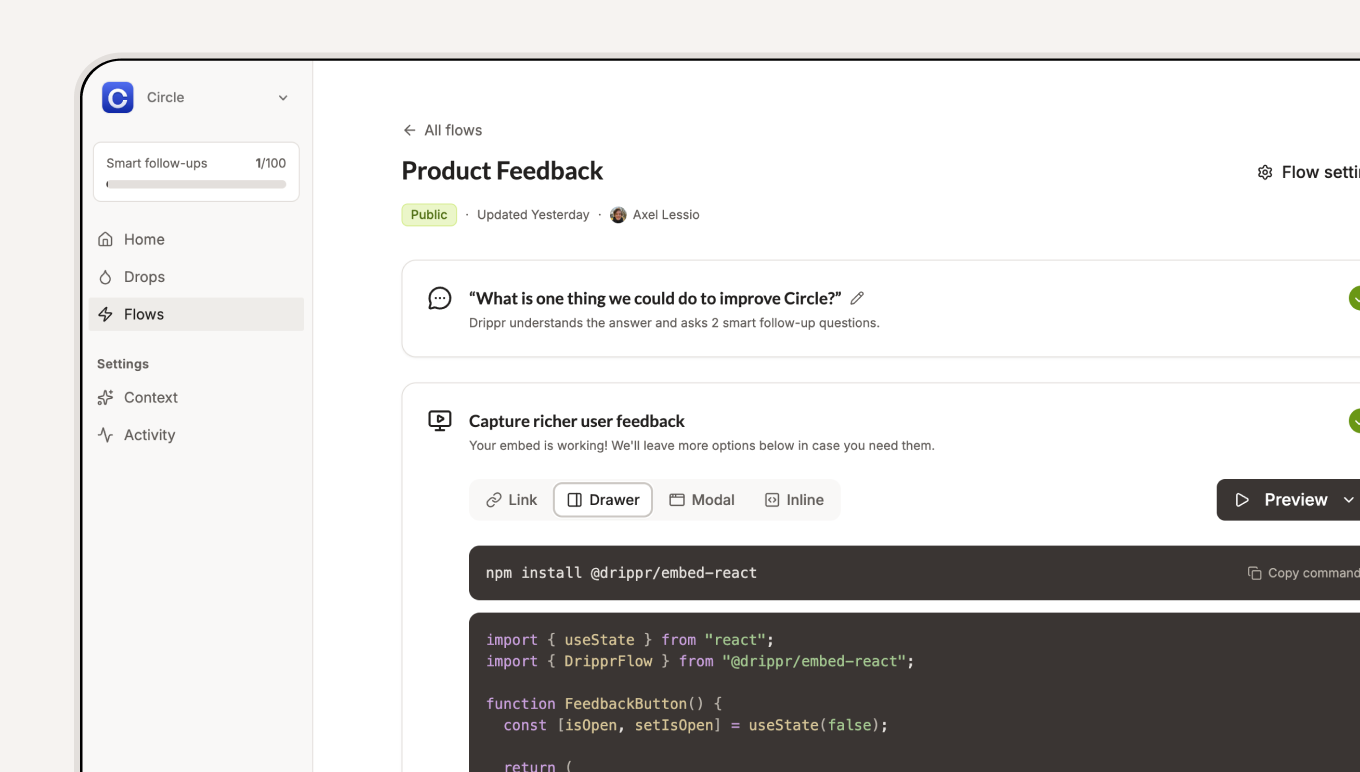

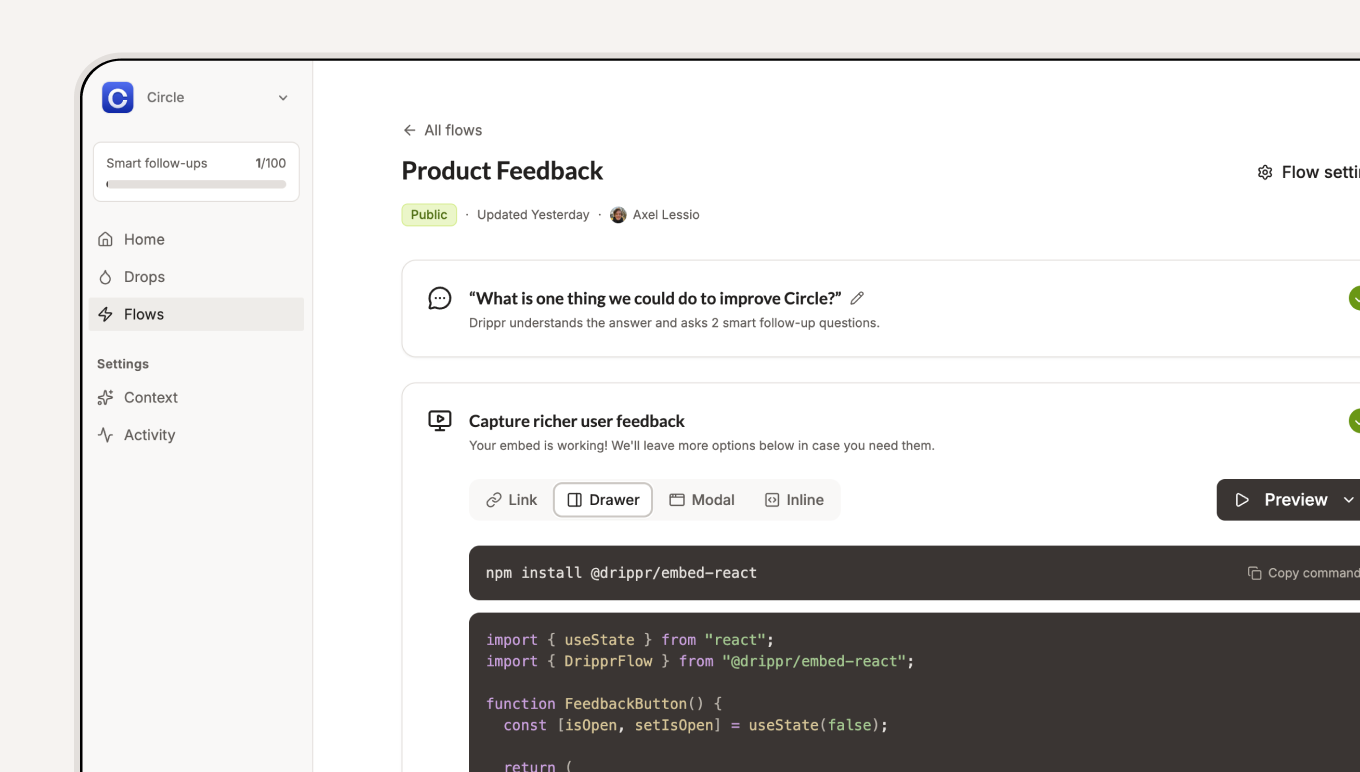

I'm building an AI-native SaaS tool to help teams collect richer feedback.

There's a gap between deep research and feedback forms. On one side, in-depth interviews are costly and hard to scale.

On the other, in-app surveys and feedback widgets scale well but stay shallow.

Most people involved have good intentions. The system is the problem.

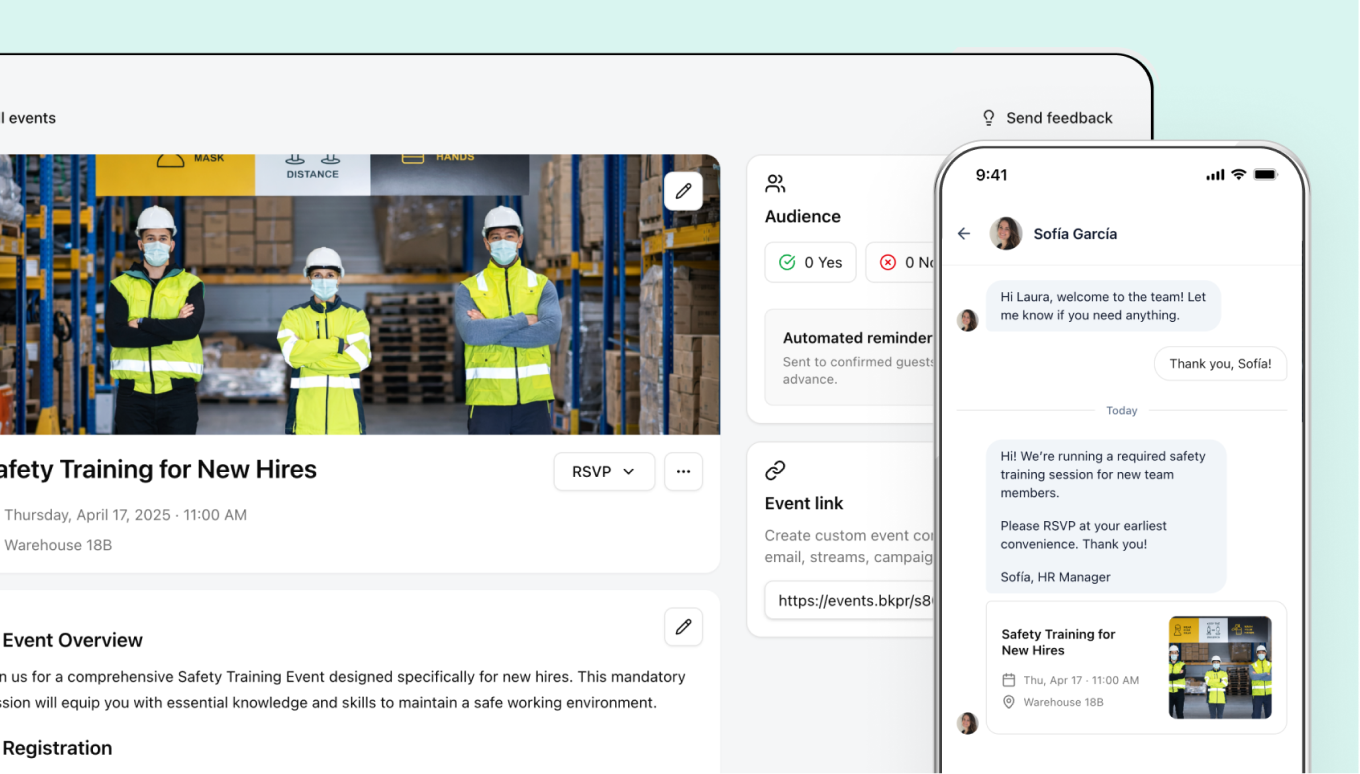

Drippr starts with a low-friction question, then asks two highly relevant follow-up questions to draw out more depth.

The participant experience is simple and very human.

For that magic moment to happen, admins first go through a guided setup, which I designed to balance clarity and feasibility.

Some inputs are participant-facing, like company name and logo. Others are essential for giving Drippr the right context.

AI helps admins with this step by generating company context and personas.

During testing, I was manually generating context and personas with ChatGPT anyway. Automating that step made the product better and more honest.

Isn't this feature too much for an MVP?

MVP here doesn’t just mean “works for users.” It also means “doesn’t kill the business before value is felt.”

The problem: friction before first value kills momentum. Trust isn't there yet, and intent is fragile.

So onboarding balances two risks:

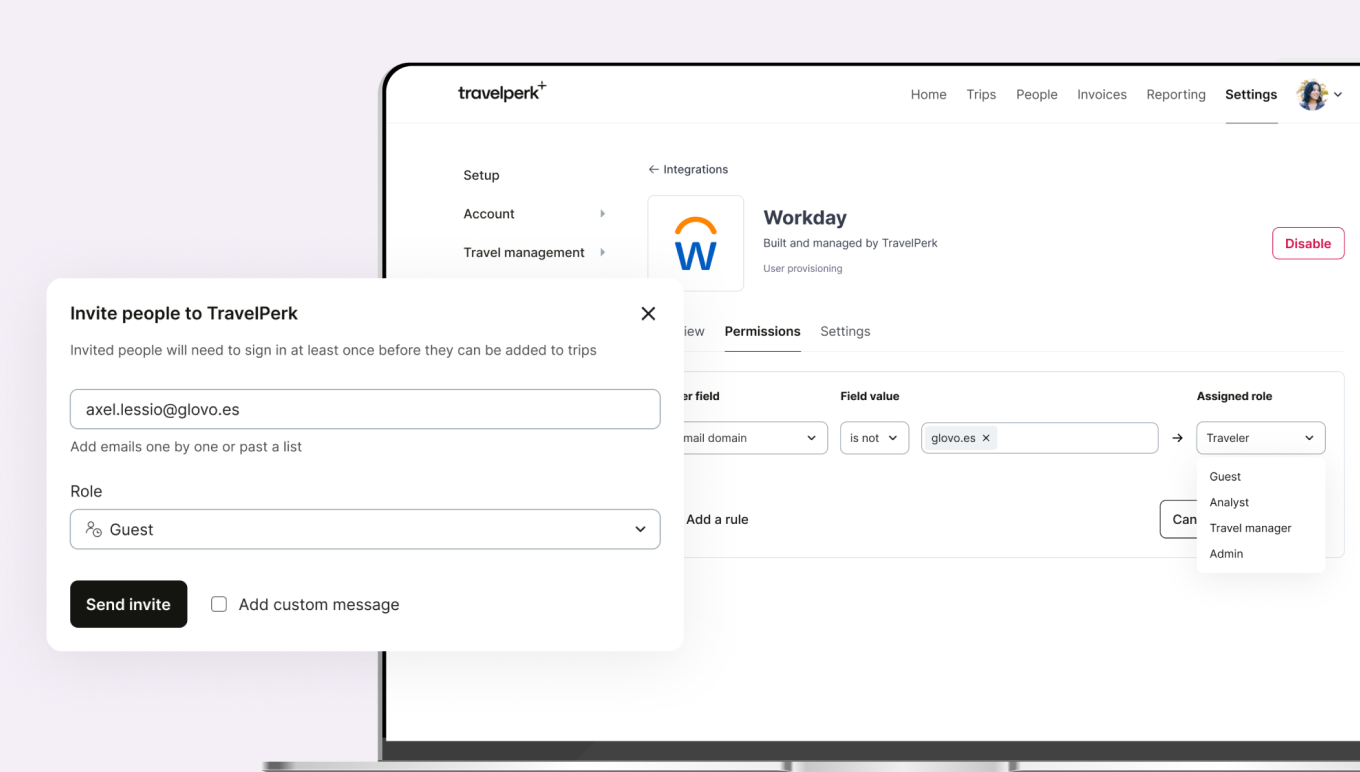

To experience real value, teams need to:

The 'aha!' moment is when real feedback flows through the system and reaches the places where insights already live.

That constraint keeps the product honest, even if it makes activation harder.

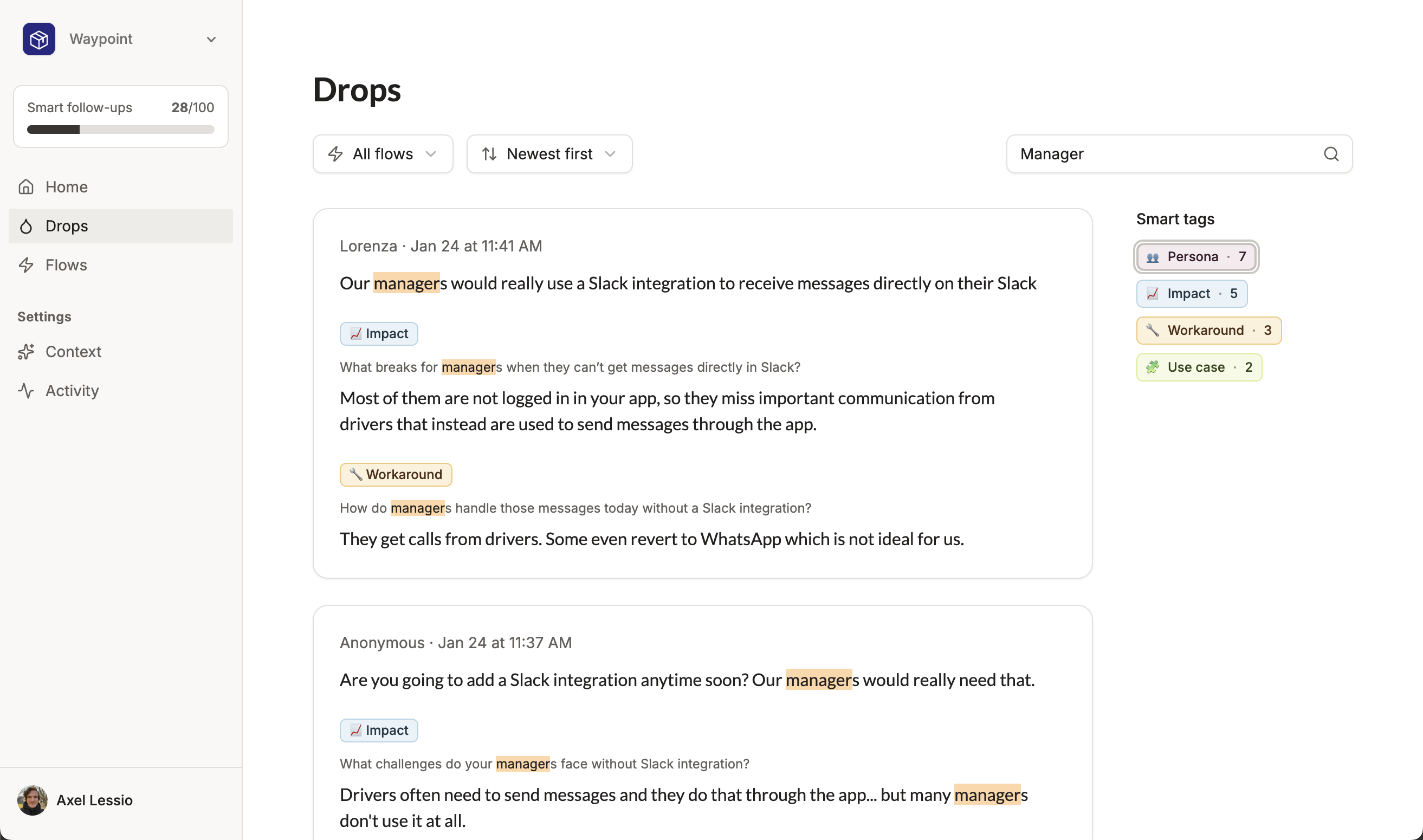

Instead of scanning lists of requests, they can look for patterns in:

* * *

I didn’t start Drippr to learn to code.

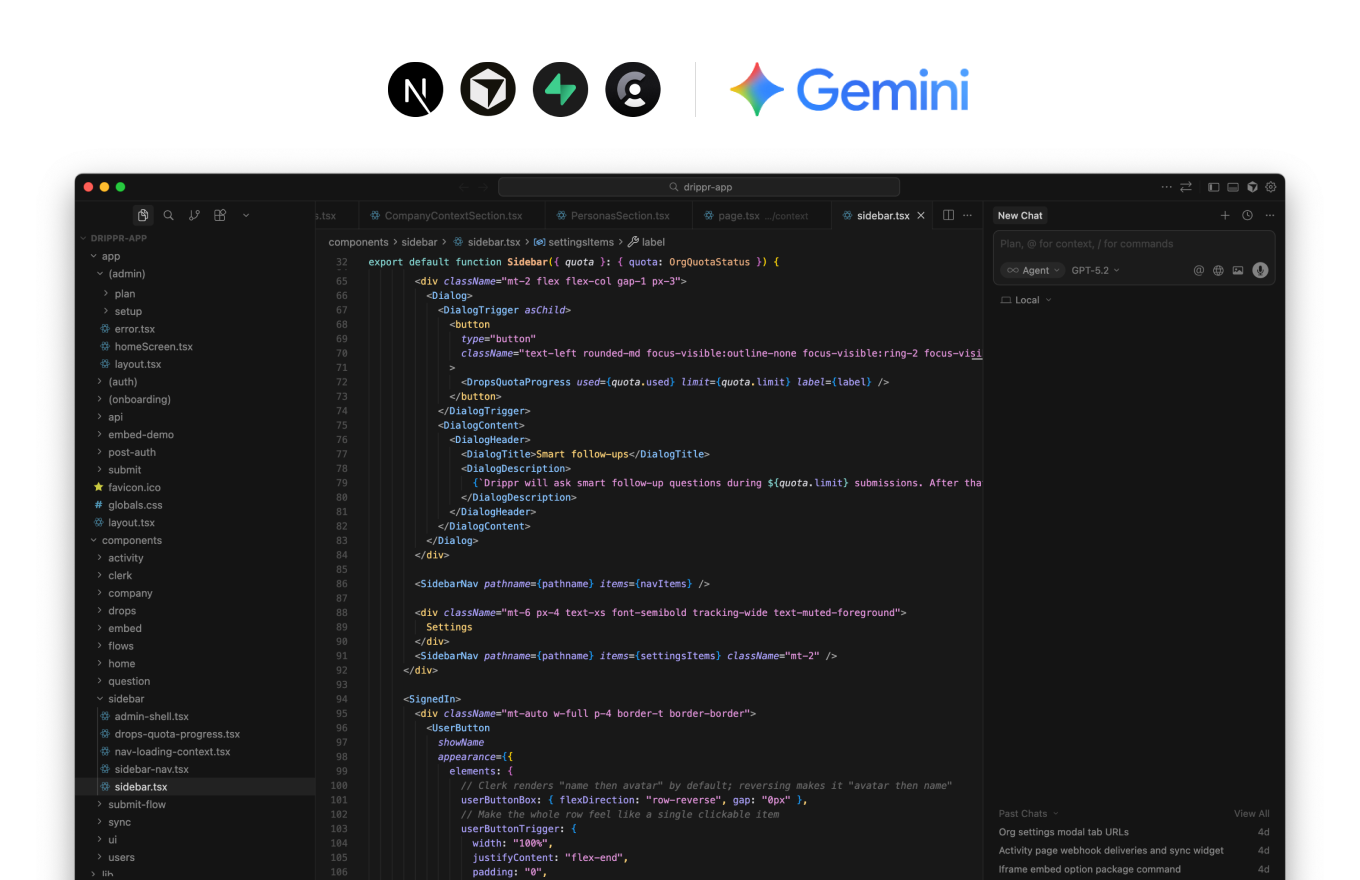

I started because I really wanted to solve the problem, but I couldn't hire a developer. Cursor and AI became a means to an end.

Over time, it allowed me to ship things I wouldn’t have attempted before:

The constraint shaped the product... and also the way I now think about shipping.

...but I held myself accountable.

“The priority rating for each request is pretty much always urgent and important. It’s not a metric we swear by.” – PM during a discovery call

* * *